Neuroscience and High Performance Computing at “Shaping Europe’s Digital Future”

20 April 2018

On 19 April, scientists and industrialists as well as representatives of EU institutions and ministries from Southern and Eastern Europe gathered in Sofia for the Bulgarian Presidency event "Shaping Europe’s Digital Future - High Performance Computing for Extreme Scale Scientific and Industrial Applications" to discuss the central role of High Performance Computing (HPC) in many fields of European science and industry. At the event, held at the National Palace of Culture, the Human Brain Project (HBP) demonstrated how neuroscience and computing act as major driving forces for research and innovation. In a productive cycle, the two fields enable new insights into the brain’s astonishing complexity, provide the basis for new therapies, and drive progress in HPC and related fields such as Artificial Intelligence.

The conference was opened by the Bulgarian Prime Minister Boyko Borissov, together with Mariya Gabriel, EU Commissioner for Digital Economy and Society, and Prof. Ivan Dimov, Deputy Minister for Education and Science. The speakers highlighted the European High-Performance Computing Joint Undertaking (EuroHPC) as a priority topic for the Bulgarian Presidency of the Council of the EU, which started in January 2018. The initiative proposes investing 1 billion EUR in a world-class European supercomputer infrastructure, including two Exascale systems. In her welcoming words, the Commissioner emphasized that High Performance Computing plays a crucial role in addressing some of the most challenging questions in science: “Just think of the challenge of understanding the human brain. This endeavor would not be thinkable without the highly advanced computing powers of these systems.”

#HPC is key technology for #DigitalTransformation of #Industry & #Society. EU’s scientific capabilities & competitiveness depend on its ability to access world-class #HPCInfrastructure. Our #EuroHPC JU is an ambitious response to ranking #EU in top position 4 #DigitalEra 🇧🇬🇪🇺 pic.twitter.com/WA1CrQJtCT

— Mariya Gabriel (@GabrielMariya) April 19, 2018

European Leadership in High Performance Computing for Extreme Scale Applications is instrumental for breakthrough enablers like @HumanBrainProj #EU2018BG @GabrielMariya brings together those serving to shape Europe’s Digital Future @PRACE_RI @FETFlagships #EuroHPC pic.twitter.com/MdVqqOfwMh

— Chris Ebell (@HBPExecDir) April 19, 2018

As a project at the interface of computing and neuroscience, the Human Brain Project contributed central parts of the program. HBP Scientific Director Prof. Katrin Amunts hosted the session “The growing role of HPC in Neuroscience – the Human Brain Project FET Flagship”. In this session, Prof. Jeanette Hellgren-Kotaleski presented approaches that use simulations and 3D reconstructions of tissue at the cellular level to better understand neuronal networks. Prof. Viktor Jirsa showed how so-called “mean-field” models and simulations can have a direct impact on how patients with epilepsy are treated, thus contributing to personalized medicine. Using machine learning methods on HPC systems, Jirsa creates individual patient brain models that give neurosurgeons a new source of information to help identify epileptogenic zones. Kristina Kapanova from the Bulgarian National Center for Supercomputing Applications (NCSA) spoke about the links between machine learning, brain atlas systems and simulations with NEST, one of the HBP’s major simulators. In the concluding talk, Prof. Amunts showed how High Performance Computing enables the construction of the HBP’s massive multilevel 3D atlas of the brain, a major new tool for understanding the levels of complex brain organization in a more integrative way. She emphasized that a new version of this atlas will soon be released to the community.

Young Investigators Perspective: Kristina Kapanova uses the #NEST-simulator for her research
Neural networks - main pillars of #MachineLearning pic.twitter.com/gH6GEsHy9t

— HBP HPAC Platform (@HBPHighPerfComp) April 19, 2018

“We have large amounts of data on many levels, from genes and molecules to the whole organ and cognitive level, but it is necessary to find new ways of making these data accessible and to link them in a meaningful way”, Amunts said. Only HPC can provide the technological basis required for this – and both sides benefit from this collaboration: “As neuroscientists we approach computing with our data and analytics needs, and in turn generate new insights into the basis of biological information processing that can help inspire novel computing paradigms.”

Prof. Thomas Lippert, leader of the HBP’s High Performance Analytics and Computing (HPAC) Platform, hosted the session “HPC for extreme scale scientific applications” together with Prof. Stoyan Markov from NCSA. Among the topics highlighted was the success of PRACE, the Partnership for Advanced Computing in Europe. As a centerpiece of the European HPC strategy, PRACE is a major element of the HBP’s supercomputing infrastructure for neuroscience. Lippert also hosted the panel discussion “HPC and Future Computing Paradigms”, covering approaches such as quantum and neuromorphic computing, with leading experts from scientific research as well as from industry, including Atos and IBM Europe.

High-level experts from the HBP also took part in the panel “The Future of Personalised Health and Medicine”. Professor Emrah Düzel moderated a discussion of the questions raised by the growing amounts of data generated in patient studies, including long-term studies covering large periods of time and cohort studies with many thousands of participants. The panelists, among them Professor Gitte Knudsen, Chair of the HBP Science Advisory Board, emphasized that new concepts and tools are needed to make full use of these data and to ensure that they are used in the way that most benefits the individual patient.

Thomas Lippert @Tomtherhymer moderating great panel discussing emerging computing strategies in the next 5-10 years, based on existing and future computing platforms exascale computing, #QuantumComputing #neuromorphic computing. @EuroHpc @PRACE_RI #EU2018bg #София pic.twitter.com/kkrh1YkATL

— Chris Ebell (@HBPExecDir) April 19, 2018

At the accompanying Human Brain Project exhibition in the foyer of the conference venue, a range of research areas were represented. Exhibits included the neuromorphic hardware systems SpiNNaker and BrainScaleS, which are developed and used in the HBP. Visitors could learn more about research in the HPC Platform, the Human Brain Atlas, theoretical neuroscience, simulation of large-scale brain networks, the HBP Collaboratory and the Medical Informatics Platform, as well as about ethics and data governance and upcoming educational events and activities in the Human Brain Project. Professor Amunts welcomed Commissioner Gabriel at the exhibition. The Commissioner expressed her deep interest in the activities of the HBP and set aside a good deal of time for discussions with young researchers.

HBP exhibits include neuromorphic hardware, posters, demos and simulations, and a lot of informative material.
Further, talks by distinguished HBP scientists are part of the program. https://t.co/EHb9VQDZuz #EURO2018BG pic.twitter.com/0RJ7SNUtnX

— HBP Education (@HBP_Education) April 19, 2018

.@HumanBrainProj had the honor of welcoming Commissioner @GabrielMariya at the HBP exhibition at “Shaping Europe’s Digital Future” in Sofia. #EU2018BG pic.twitter.com/FQWHyy49LA

— HBP Education (@HBP_Education) April 19, 2018

We had the honour to count with the excellent presence, active participation & presentations of the @HumanBrainPrj at the #HLC on #HPC. It is inspiring to see the commitment of the scientists involved to improve the quality of medicine. Thank you pic.twitter.com/3JghpczzEw

— Mariya Gabriel (@GabrielMariya) April 19, 2018

One day earlier, the HBP Young Researchers Event 2018: Brain Models and Computation for Brain Medicine was held in Sofia as a satellite event. Renowned experts from the various research areas of the Human Brain Project introduced participants to brain modelling tools from both software and hardware perspectives. Topics included the HBP human brain atlas, the use of HPC brain models for clinical applications, the HBP Medical Informatics Platform, the HBP Collaboratory, the HBP infrastructure provided by Fenix and the High Performance Analytics and Computing Platform, neuronal network simulations, neuromorphic computing, and ethics and data governance in the HBP. In addition, a panel discussed what can be expected from data science and simulation for brain medicine. The event had 60 participants, including members of the local scientific community as well as researchers from Eastern and Southern European countries.

Shaping Europe’s Digital Future served as an inspiring meeting point for Europe’s plans and ambitions in HPC. “Together we made a step forward for making Europe the leader in HPC”, said Commissioner Gabriel in her concluding statement. “The achievements of the Human Brain Project and its goal to share them and encourage future generations of researchers are wonderful illustrations of what we can achieve together”.

Further Information: Examples of the productive relationship between 21st-century neuroscience and High Performance Computing in the HBP

1. Atlasing: Taming the Brain’s Complexity with High Performance Computing

With new high-resolution and high-throughput methods, neuroscientists have been able to generate massive amounts of information about the healthy and diseased brain. So far, however, the wealth of data in neuroscience has far outpaced the ability to integrate it and make sense of it. The HBP’s strategy is to use advanced computational methods to integrate these enormous amounts of data into a common framework, and to process and analyze them to gain a better understanding of the multilevel organization of the brain. High Performance Computing (HPC) has to be an integral part of this.

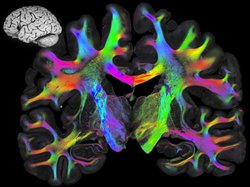

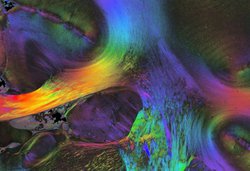

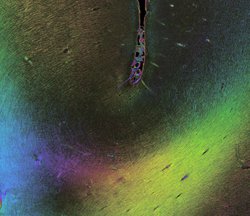

One example of such a high-resolution method is Polarized Light Imaging (PLI), a technique for investigating the “Connectome”, the sum of all connections in the brain, from the tiniest neuronal branches up to large networks. Neuronal connections are the “roads” along which information travels through the brain, and PLI shows these connections in exquisite detail. However, displaying and analyzing this amount of data would be impossible without HPC. The image of a single brain section with all its connections has 120,000 × 80,000 pixels. The raw data of such a section alone require about 750 GB of storage, so just one or two sections would immediately fill the storage of a standard computer. Thousands of these images are necessary to create a model of connectivity for a single brain. Other approaches in neuroscience produce datasets that are as large or larger.
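The storage figures above can be checked with a short back-of-the-envelope calculation. The pixel dimensions and the 750 GB per section come from the text; the section count of 2,000 is a hypothetical stand-in for "thousands", not an HBP figure, and decimal units (1 TB = 1,000 GB) are assumed.

```python
# Back-of-the-envelope storage estimate for PLI raw data, using the figures
# quoted in the text: 120,000 x 80,000 pixels and ~750 GB per section.
WIDTH_PX = 120_000
HEIGHT_PX = 80_000
RAW_GB_PER_SECTION = 750

# Hypothetical section count: the text only says "thousands";
# 2,000 is an illustrative assumption, not a figure from the HBP.
SECTIONS_PER_BRAIN = 2_000


def pixels_per_section(width: int, height: int) -> int:
    """Number of pixels in one section image."""
    return width * height


def brain_storage_tb(sections: int, gb_per_section: float) -> float:
    """Total raw storage for one brain in TB (decimal: 1 TB = 1,000 GB)."""
    return sections * gb_per_section / 1_000


px = pixels_per_section(WIDTH_PX, HEIGHT_PX)
print(f"{px / 1e9:.1f} gigapixels per section")  # 9.6 gigapixels per section
print(f"{brain_storage_tb(SECTIONS_PER_BRAIN, RAW_GB_PER_SECTION):,.0f} TB per brain")  # 1,500 TB per brain
```

Even with this conservative section count, one brain amounts to roughly 1.5 PB of raw data, far beyond what a workstation can hold.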

Understanding the organization of the brain requires investigating data at many different levels, from genes, molecules and receptors through cells, microcircuits, long-range connections and networks (including the Connectome) to regional characteristics, whole-brain maps, cognition and behavior, and analyzing all of them in relation to each other.

The HBP Human Brain Atlas allows researchers to integrate large datasets into the most comprehensive atlas of the human brain to date. Neuroscientists and supercomputing experts work together so that these two fields of research grow together productively, creating the technology and workflows for investigating the brain with High Performance Computing. The resulting atlas and analytics tools are available to researchers around the world through their web browsers.

The multilevel models derived from the atlas data can then be tested in simulations, where they are “put in motion”, and the results are fed back to empirical research, inspiring new experimental questions. Only High Performance Computing can enable this way of dealing with the data challenge posed by the brain’s complexity.

In this process, computing experts learn to set up new workflows that take into account the specific requirements of the neuroscience community, such as making HPC resources and tools accessible from web browsers (other research communities typically use these systems in “batch mode”, i.e. non-interactively). The HPC experts thus open up the High Performance Computing and data infrastructure to a new research community, in co-design with the scientists: neuroscience provides use cases that help to develop new technologies which will also benefit other communities, e.g. with respect to memory architecture or interactive supercomputing. This is a win-win situation for both communities.

Pictured above: PLI images. Information on the collaboration between neuroscientists and supercomputing experts in processing 3D Polarized Light Imaging data is available here: https://hbp-hpc-platform.fz-juelich.de/?page_id=794

Example of PLI mapping: Zeineh et al.: Direct Visualization and Mapping of the Spatial Course of Fiber Tracts at Microscopic Resolution in the Human Hippocampus. Cerebral Cortex, 1 March 2017, pp. 1779–1794, https://doi.org/10.1093/cercor/bhw010

2. Progress in Brain Modelling and Simulation

New algorithms for large-scale brain simulations of networks and plasticity

16. 02. 2018 – Researchers from Forschungszentrum Jülich, Germany, RIKEN in Kobe and Wako, Japan, and the KTH Royal Institute of Technology, Stockholm, Sweden, have taken a decisive step towards creating the technology to achieve simulations of brain-scale networks on future exascale supercomputers. At the same time, the new algorithm significantly speeds up brain simulations on existing supercomputers. Read more: https://www.humanbrainproject.eu/en/follow-hbp/news/an-algorithm-for-large-scale-brain-simulations

Another HBP team has developed an algorithm to simulate the structural plasticity of the brain at an unprecedented scale. The neural network of the brain is not hardwired: even in the mature brain, new connections between neurons are formed and existing ones are deleted. This phenomenon, called “structural plasticity”, is crucial for learning, memory and rehabilitation after injury, for example after a stroke.

Sebastian Rinke from TU Darmstadt, Germany, and his colleagues present a scalable approximation algorithm for modelling structural plasticity. The team demonstrates scalability for up to a billion neurons. Moreover, performance extrapolations suggest that, given a sufficiently large machine, the algorithm could even simulate the number of neurons found in the human brain, which is around 100 billion.
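Why an approximation is needed at this scale becomes clear from simple counting. The sketch below assumes a naive exact scheme in which every neuron considers every other neuron as a potential new partner; it does not reproduce Rinke et al.'s algorithm, and the n log2 n figure is only an illustrative cost for a hierarchical approximation, not the complexity stated in the paper.

```python
import math


def all_pairs(n: int) -> int:
    """Ordered candidate pairs if every neuron considers every other neuron
    as a potential new partner (naive exact scheme, an assumption)."""
    return n * (n - 1)


def n_log2_n(n: int) -> float:
    """Illustrative cost of a hierarchical, n log n-style approximation.
    A stand-in for comparison only, not the complexity from the paper."""
    return n * math.log2(n)


for label, n in [("1 billion neurons", 10**9),
                 ("human brain, ~100 billion neurons", 10**11)]:
    print(f"{label}: {all_pairs(n):.2e} pairs (naive) "
          f"vs ~{n_log2_n(n):.2e} (n log n)")
```

Under this assumption, a billion neurons already means around 10^18 candidate pairs per rewiring step, which is why exact evaluation does not scale to brain size.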

Original publications:

Jakob Jordan et al.: Extremely Scalable Spiking Neuronal Network Simulation Code: From Laptops to Exascale Computers. Front. Neuroinform., 16 February 2018 https://doi.org/10.3389/fninf.2018.00002

Sebastian Rinke et al.: A scalable algorithm for simulating the structural plasticity of the brain. Journal of Parallel and Distributed Computing, available online 7 December 2017 https://doi.org/10.1016/j.jpdc.2017.11.019

3. Application example in Medicine

Epilepsy: Building Personalised Models of the Brain

Human Brain Project scientist Viktor Jirsa heads a team that is creating personalised brain models for patients with intractable epilepsy. The project EPINOV, short for “Improving EPilepsy surgery management and progNOsis using Virtual brain technology”, is testing the use of brain modelling to improve epilepsy care in a large clinical study. The project builds on the macroscale brain simulation technology developed by Jirsa and his collaborators in “The Virtual Brain” project, and aims to create personalised brain models of patients to help clinicians plan surgery strategies. The Virtual Brain approach rests on the fusion of nonlinear dynamic modelling of brain region activity, network connectivity derived from a patient’s brain images, and large-scale computational brain simulations. High Performance Computing enables both the personalisation of the brain network models through machine learning and the discovery of novel therapeutic interventions through high-dimensional brain network simulations and parameter sweeps. A pilot study showed promising results in a small retrospective cohort of patients with pharmaco-resistant epilepsy. In a world first, this personalised brain modelling approach now provides the basis for a large-scale clinical trial with prospective patients in eleven French hospitals. A consortium began preparatory work at the beginning of 2018, and clinical testing is planned to begin in January 2019.

In this article on the HBP website, Viktor Jirsa explains the process and how the HBP’s brain models and cross-disciplinary collaborations are central to a new way of targeting epilepsy surgery. https://www.humanbrainproject.eu/en/follow-hbp/news/the-power-of-a-personalised-model-of-the-patients-brain/

4. How neuroscience impacts HPC:

HPC and data technologies are becoming increasingly available to neuroscience and adapted to its needs.

The High Performance Analytics and Computing (HPAC) Platform of the Human Brain Project builds the computing and data infrastructure for the HBP together with the Fenix project (https://fenix-ri.eu/), driven by requirements of the neuroscience community that go beyond the demands of other communities. The HPAC Platform is built by five leading European supercomputing centres: the Barcelona Supercomputing Centre in Spain, the TGCC of CEA in France, Cineca in Italy, the Swiss National Supercomputing Centre and the Jülich Supercomputing Centre in Germany.

What sets neuroscience apart from other communities can be summarised in one word: “interactivity”. Tools for working with the brain atlases, for instance, need fast and efficient access to huge amounts of data. From a technological perspective, this means on the one hand that storage and memory hierarchies as well as smart algorithms are needed to make access to the datasets fast enough to be truly interactive. On the other hand, interactive computing resources are also needed on which the tools can run. In addition, the data need to be stored close to the interactive and large-scale compute resources. This also enables other usage scenarios, for example connecting a visualisation or analysis tool to a large-scale simulation running on a supercomputer to check whether the network evolves as expected, or to reconfigure the simulation at runtime. The Fenix infrastructure takes these aspects into account, focusing on a federated data infrastructure and interactive computing services as well as elastic access to scalable compute resources. The HPAC Platform develops the software layer that makes this complex, federated infrastructure easy to use in the intended way. A key role is played by the UNICORE middleware, which provides access to the infrastructure from the HBP Collaboratory, the project’s web portal. The solutions and new technologies developed will also benefit other communities that use these facilities for their research.
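The runtime-steering scenario described above can be illustrated with a deliberately small sketch. It assumes nothing about UNICORE, Fenix or any HBP tool: a Python generator stands in for the simulation, and a hypothetical `monitor` function stands in for the visualisation or analysis tool. In a real deployment the two would run on different machines and communicate over the network, which this sketch omits.

```python
from typing import Generator, List, Optional

SimGen = Generator[float, Optional[float], None]


def steerable_simulation(rate: float, steps: int) -> SimGen:
    """Hypothetical toy 'simulation': one state variable that grows by
    `rate` per step, standing in for a large-scale network simulation."""
    state = 0.0
    for _ in range(steps):
        state += rate
        # Publish the current state to the attached tool and accept an
        # optional new rate (runtime re-configuration); None keeps the rate.
        new_rate = yield state
        if new_rate is not None:
            rate = new_rate


def monitor(sim: SimGen, expected_max: float, damped_rate: float) -> List[float]:
    """Hypothetical analysis tool: records every state it sees and steers
    the simulation to a lower rate once the state exceeds `expected_max`."""
    history: List[float] = []
    state = next(sim)  # start the simulation and receive the first state
    while True:
        history.append(state)
        command = damped_rate if state > expected_max else None
        try:
            state = sim.send(command)
        except StopIteration:  # simulation has finished all its steps
            return history


print(monitor(steerable_simulation(rate=2.0, steps=5), expected_max=3.0, damped_rate=0.5))
# [2.0, 4.0, 4.5, 5.0, 5.5]
```

The monitor sees every intermediate state and intervenes only when the state leaves the expected range, something batch-mode use (submit, wait, inspect the output afterwards) cannot offer.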

Katrin Amunts @HumanBrainProj : sharing of #humanbrain #data has been the driver 4 the development of #standards & #infrastructure - real case-driven development is key, agree! Looking 4 #brain related resources? https://t.co/vCEqQy4vig… #RDAPlenary #FAIRdata @FAIRsharing_org pic.twitter.com/97bXvpg3yf

— SAS (@SusannaASansone) March 21, 2018

HBP Pilot system JURON is in top 5 of the new IO500 ranking for supercomputers

In the first project phase, the HPC experts in the HBP worked with industry partners in a Pre-Commercial Procurement to develop prototype HPC systems based on the requirements of the neuroscience community. The two pilot systems, JURON, developed by IBM and NVIDIA, and JULIA, developed by Cray, have been available to HBP scientists since 2016. Both were designed and built specifically for neuroscience, with a focus on dense memory integration, scalable visualisation and dynamic resource management. They are maintained at the Jülich Supercomputing Centre in Germany. JURON placed near the top of the IO500 list, a new ranking for supercomputers that takes into account the increasing complexity of HPC data management and evaluates systems on a range of benchmarks. JURON’s BeeGFS file system ranked first in the per-client measurements, and in the overall bandwidth-based ranking it reached fifth place. Further information:

http://www.fz-juelich.de/portal/EN/Research/ITBrain/Supercomputer/JULIA_JURON/_node.html
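Rankings such as the IO500 condense several benchmark phases into composite scores, which is why a system can lead one measurement (per-client performance) while placing fifth in another (overall bandwidth). The sketch below assumes a geometric-mean aggregation of the kind the IO500 uses: a bandwidth score and a metadata score, each the geometric mean of its benchmark phases, combined by a further geometric mean. The exact phases and units of the 2017 list may differ, and the input values are invented for illustration, not JURON's results.

```python
import math
from typing import Sequence, Tuple


def geometric_mean(values: Sequence[float]) -> float:
    """n-th root of the product, computed through logarithms to avoid overflow."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


def io500_style_score(bandwidth_gib_s: Sequence[float],
                      metadata_kiops: Sequence[float]) -> Tuple[float, float, float]:
    """Combine per-phase results into bandwidth, metadata and overall scores,
    assuming geometric-mean aggregation at every level."""
    bw = geometric_mean(bandwidth_gib_s)
    md = geometric_mean(metadata_kiops)
    return bw, md, math.sqrt(bw * md)


# Hypothetical benchmark results, not measurements from any real system.
bw, md, total = io500_style_score([8.0, 2.0], [50.0, 2.0])
print(f"bandwidth {bw:.1f} GiB/s, metadata {md:.1f} kIOPS, score {total:.1f}")
```

A geometric mean penalises imbalance more than an arithmetic mean would, so one very fast phase cannot fully mask a slow one.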

Congrats to @fzj_jsc team for achieving the best per-client performance with their BeeGFS file system on Juron in the new #IO500 list at #SC17. Whitepaper for Juron is here: https://t.co/hTDo9EiCRe pic.twitter.com/D0KzYVuq5d

— BeeGFS (@BeeGFS) November 16, 2017

5. Conceptual foundations:

HBP addresses High Performance Computing for neuroscience

02. 11. 2016 – In a recent article in the journal Neuron, HBP researchers helped to define the role of High Performance Computing (HPC) for the future of neuroscience. Technical and conceptual advances in neuroscience, fueled by a number of multinational brain science initiatives including the Human Brain Project, have accelerated the field at an impressive pace. These advances give rise to unprecedentedly large and diverse datasets covering structural and functional data, innovative data modalities on multiple spatial and temporal scales, and detailed models that simulate realistic dynamics of brain networks. The complexity of such data and simulations dramatically increases the importance of providing the infrastructure and technology needed to harvest the full potential of these achievements by means of HPC. The opinion article, co-authored by a consortium of researchers working at the forefront of making HPC resources available to neuroscience, including Markus Diesmann and Michael Denker representing the HBP, addresses this issue. The authors formulate a set of "Grand Challenges" that HPC needs to address urgently in the coming years. The article stresses the importance of tackling these endeavors as an international, concerted activity, embracing standardization efforts to facilitate highly collaborative and reproducible simulation and data analysis with the help of tomorrow's computer technology.

Read the paper published in Neuron: http://www.cell.com/neuron/fulltext/S0896-6273(16)30785-1

Key HBP roadmap article published in Neuron

02. 11. 2016 – The November issue of Neuron (Volume 92, Issue 3, 02 November 2016) features a key article presenting the roadmap for the HBP’s future direction. It describes new strategic objectives first announced at the HBP Summit in Florence in October 2016. The HBP is creating a European research infrastructure to examine the most complex processes and structures of the brain using detailed analyses and simulations. The article explains how the Project is rooted in Information and Communications Technology (ICT), particularly in terms of the integrated system of HBP Platforms and the services they offer to users. It pays particular attention to the roles of the Neuroinformatics Platform as the core of the IT architecture and of the High Performance Analytics and Computing Platform as the main hardware support.

https://www.humanbrainproject.eu/en/follow-hbp/news/key-hbp-article-published-in-neuron/