Order from chaos, chaos from order

19 December 2019


The brain's as yet unrivaled ability to process complex but noisy and incomplete sensory data continues to be an important source of inspiration for the development of artificial intelligence. At the same time, the manifest complexity of its spiking activity makes it an extremely difficult object to study, let alone to copy. In particular, it remains unclear how and why evolution has chosen spiking neurons as the elementary units of cortical communication and computation. In a series of publications, a team of researchers from the Human Brain Project led by Dr. Mihai Petrovici has come a step closer to elucidating this mystery and to replicating this aspect of cortical computation in so-called neuromorphic hardware. These findings are the result of a joint effort across three European institutions: the Department of Physiology at the University of Bern, the Kirchhoff-Institute for Physics at the University of Heidelberg and the Institute for Computational, Systems and Theoretical Neuroscience at the Jülich Research Center. The young researchers in the team were also recently awarded a prize for their achievements.

By strictly differentiating between two possible states - a neuron either spikes or it doesn't - cortical neurons naturally lend themselves to the representation of discrete variables. At any point in time, the activity of a cortical network can then be interpreted as a high-dimensional state, a snapshot within a vast, complex landscape of possibilities. After repeated observation of sensory inputs, spiking networks can learn to produce snapshots that are increasingly compatible with their sensory stimuli.

<iframe allowfullscreen="true" frameborder="0" height="205" src="https://www.youtube.com/embed/QYMiBd0yCXw" style="max-width:100%;" width="365"></iframe>

Figure taken from Akos Kungl et al., Accelerated physical emulation of Bayesian inference in spiking neural networks, Frontiers in Neuroscience, 2019

Thus, these networks can learn an internal probabilistic model of their environment, an ability that is critical for survival and therefore likely to be favored by evolution.
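To make the idea of network states as samples from a probabilistic model more concrete, the following is a minimal sketch and not the method used in the publications. It assumes abstract binary units standing in for spiking neurons (1 = "has recently spiked"), a Boltzmann distribution as the internal model, and a simple Gibbs update rule in place of actual neuron dynamics. The weights `W`, biases `b` and all function names are made up for illustration.

```python
import itertools
import math
import random


def energy(z, W, b):
    """Energy of binary state z under symmetric weights W and biases b."""
    n = len(z)
    pair = sum(W[i][j] * z[i] * z[j] for i in range(n) for j in range(i + 1, n))
    return -pair - sum(b[i] * z[i] for i in range(n))


def exact_distribution(W, b):
    """Target distribution p(z) proportional to exp(-E(z)), by enumeration."""
    states = list(itertools.product((0, 1), repeat=len(b)))
    weights = [math.exp(-energy(z, W, b)) for z in states]
    total = sum(weights)
    return {z: w / total for z, w in zip(states, weights)}


def gibbs_sample(W, b, n_steps, rng):
    """Visit network states by updating one randomly chosen unit at a time."""
    n = len(b)
    z = [0] * n
    counts = {}
    for _ in range(n_steps):
        k = rng.randrange(n)
        # Abstract "membrane potential": bias plus input from active partners.
        u = b[k] + sum(W[k][j] * z[j] for j in range(n) if j != k)
        # Unit k becomes active with a logistic probability of its potential.
        z[k] = 1 if rng.random() < 1.0 / (1.0 + math.exp(-u)) else 0
        counts[tuple(z)] = counts.get(tuple(z), 0) + 1
    return {s: c / n_steps for s, c in counts.items()}


# Illustrative (assumed) parameters for a three-unit network.
W = [[0.0, 1.5, -1.0],
     [1.5, 0.0, 0.5],
     [-1.0, 0.5, 0.0]]
b = [-0.5, 0.2, 0.1]

rng = random.Random(0)
target = exact_distribution(W, b)
sampled = gibbs_sample(W, b, 200_000, rng)
max_error = max(abs(target[s] - sampled.get(s, 0.0)) for s in target)
print(f"largest deviation from target: {max_error:.4f}")
```

Each visited state is one "snapshot" of the network. Over many updates, the fraction of time spent in each state approaches the probability that the model assigns to it, which is the sense in which such a network represents a probability distribution.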

<iframe allowfullscreen="true" frameborder="0" height="205" src="https://www.youtube.com/embed/lTk0PVNFtWg" style="max-width:100%;" width="365"></iframe>

Figure taken from Akos Kungl et al., Accelerated physical emulation of Bayesian inference in spiking neural networks, Frontiers in Neuroscience, 2019

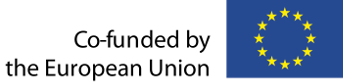

Recently, Akos Kungl et al. have been the first to replicate these computational principles on highly accelerated spiking neuromorphic hardware, using the BrainScaleS-1 system.

Figure taken from Akos Kungl et al., Accelerated physical emulation of Bayesian inference in spiking neural networks, Frontiers in Neuroscience, 2019

Spikes are not only useful for representing states, but also for switching between alternative interpretations of sensory input. When learning from data, networks tend to form strong opinions: they learn to occupy only a very small subset of similar states and ignore all other possibilities, which makes it hard for them to move on to a different interpretation. The solution is provided by a ubiquitous feature of physical systems: the finiteness of their resources. With each spike that travels between two neurons, the synapses connecting them use up a portion of their stored neurotransmitters and thus weaken temporarily, a phenomenon known as short-term plasticity. This in turn weakens the currently active ensemble of neurons, allowing the spiking network to escape from its current attractor and travel much more efficiently around the landscape of possible states, as first demonstrated by Luziwei Leng et al.
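The temporary weakening of a synapse can be sketched with a simple resource model. This is only an illustration under stated assumptions, not the model from the publication: each spike is assumed to use a fixed fraction `U` of the currently available resources, which then recover exponentially with time constant `tau_rec`. Both parameter values and the function name are hypothetical.

```python
import math


def depressing_synapse(spike_times, U=0.3, tau_rec=0.2):
    """Return the relative synaptic efficacy at each presynaptic spike.

    U        fraction of available resources used per spike (assumed value)
    tau_rec  recovery time constant in seconds (assumed value)
    """
    R = 1.0          # available resources, 1.0 = fully recovered
    last_t = None
    efficacies = []
    for t in spike_times:
        if last_t is not None:
            # Resources recover exponentially towards 1 between spikes.
            R = 1.0 - (1.0 - R) * math.exp(-(t - last_t) / tau_rec)
        efficacies.append(U * R)   # strength of this spike's effect
        R -= U * R                 # the spike uses up part of the resources
        last_t = t
    return efficacies


# A 50 Hz burst of five spikes, then a single spike after a 1 s pause.
burst = [0.02 * i for i in range(5)]
efficacies = depressing_synapse(burst + [burst[-1] + 1.0])
for e in efficacies:
    print(f"{e:.3f}")
```

In this sketch the efficacy drops with every spike of the burst and is almost fully restored after the pause. A strongly active ensemble therefore weakens its own connections for a while, which is the effect the text describes as helping the network leave its current attractor.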

Figure taken from Luziwei Leng et al., Spiking neurons with short-term synaptic plasticity form superior generative networks, Scientific Reports, 2018

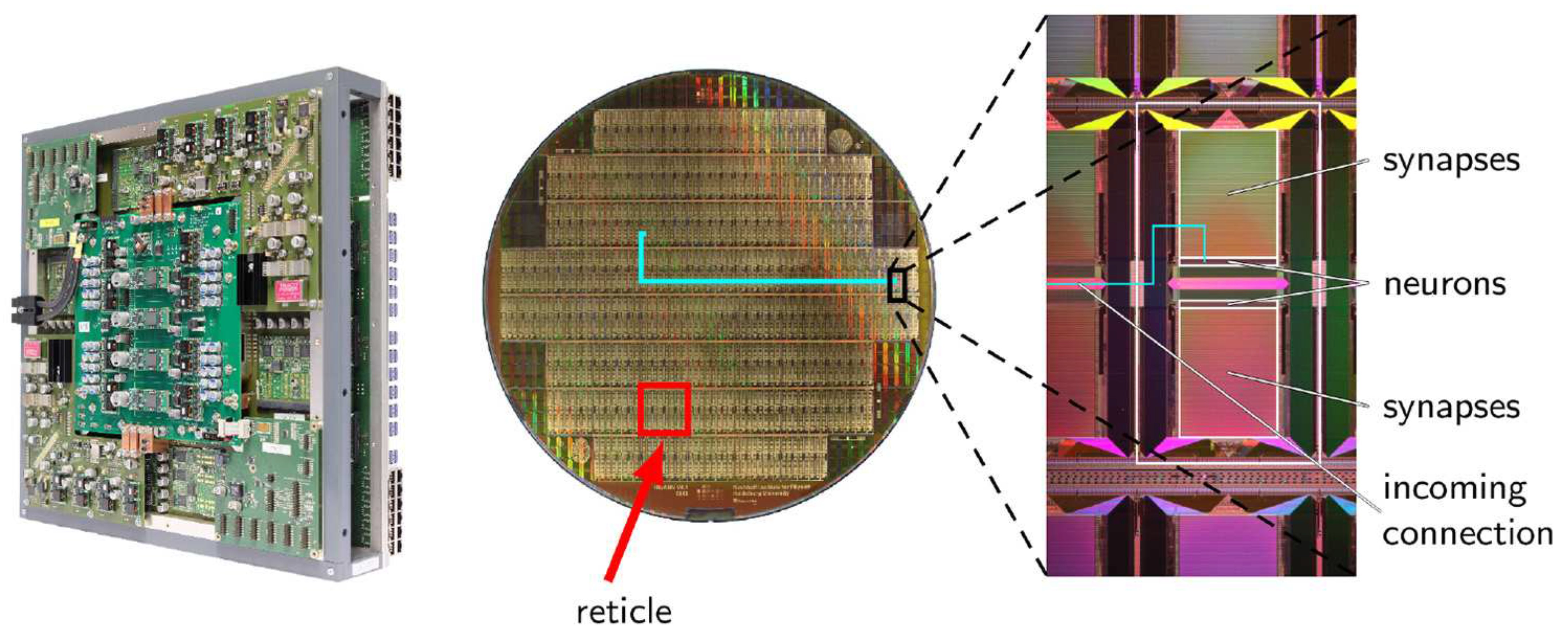

The assumption of stochastic neuronal spiking that underlies the proposed probabilistic representation is well supported by experimental evidence, but it remains unclear how such activity can arise from otherwise deterministic components. Chaos can easily arise in complex deterministic systems; it is much less clear how such a system can still maintain an internal order and continue to process information in a meaningful way. Two recent studies have proposed elegant and efficient solutions to this problem in spiking neural networks. By harnessing the internal correlations in recurrent networks, Jakob Jordan et al. have shown how even small reservoirs of excitatory and inhibitory neurons can provide the correct noise statistics for precise spike-based sampling.

Figure taken from Jakob Jordan et al., Deterministic networks for probabilistic computing, Scientific Reports, 2019

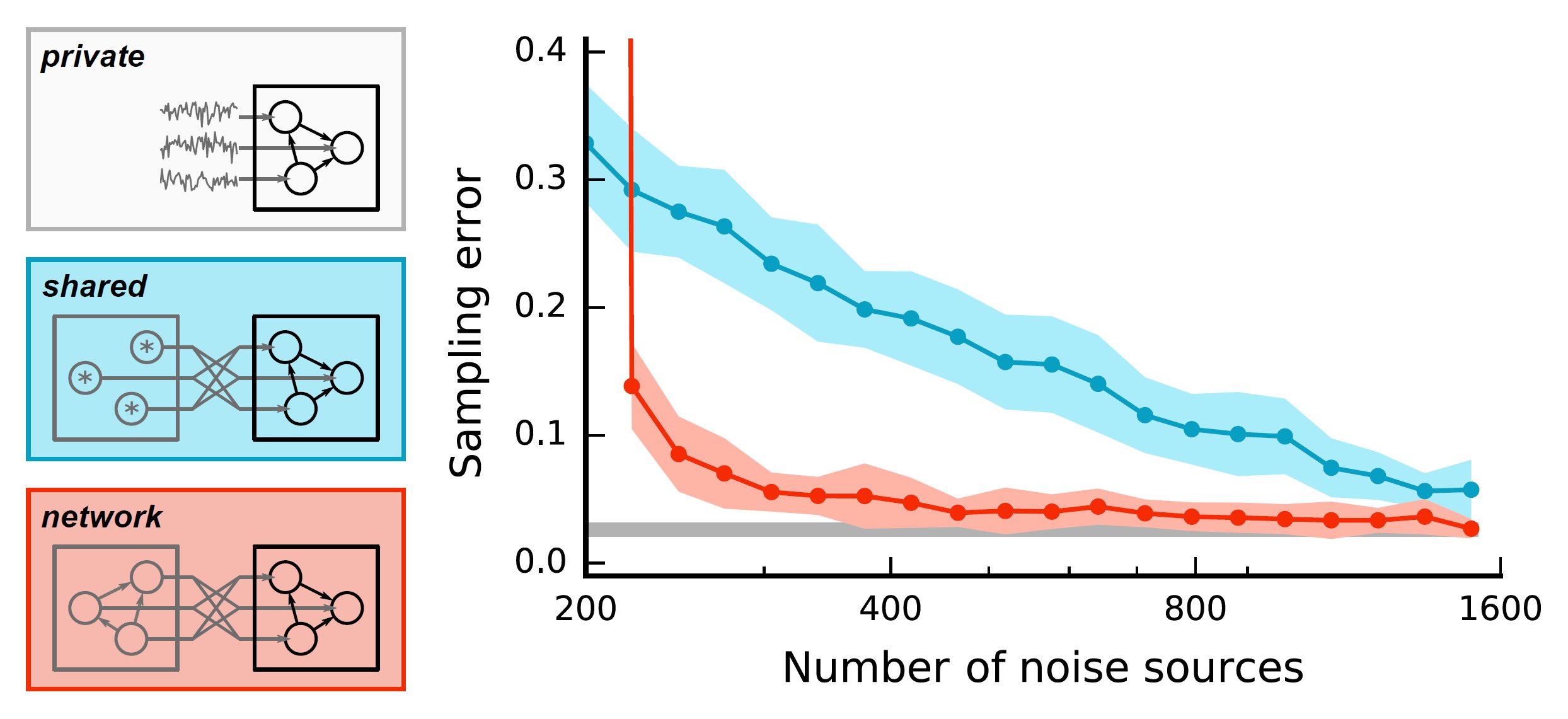

Furthermore, Dominik Dold et al. have shown how ensembles of sampling networks can learn to use each other's activity as an effective form of noise, removing the need for external noise sources altogether.

<iframe allowfullscreen="true" frameborder="0" height="205" src="https://www.youtube.com/embed/LVloxOFdW-c" style="max-width:100%;" width="365"></iframe>

Figure taken from Dominik Dold et al., Stochasticity from function - why the Bayesian brain may need no noise, Neural Networks, 2019

These are important insights not only for understanding stochasticity in the brain, but also for building artificial systems that operate on the same underlying principles.

Figure taken from Dominik Dold et al., Stochasticity from function - why the Bayesian brain may need no noise, Neural Networks, 2019

Publications:

- Luziwei Leng et al., Spiking neurons with short-term synaptic plasticity form superior generative networks

- Jakob Jordan et al., Deterministic networks for probabilistic computing

- Dominik Dold et al., Stochasticity from function - why the Bayesian brain may need no noise

- Akos Kungl et al., Accelerated physical emulation of Bayesian inference in spiking neural networks
