“Noisy” Chips: Insights from Brain Research Offer Benefits for Neuromorphic Hardware

10 March 2020

Neuromorphic chips modelled on the human brain have enormous potential, offering a promising and efficient alternative for artificial intelligence (AI) tasks in particular. However, a number of questions have yet to be answered, not least because the mechanisms and principles that make the original model – our brain – so efficient remain unclear to this day.

Scientists from Forschungszentrum Jülich together with their partners from the Human Brain Project have now shed light on an aspect of biological information processing that had previously remained a mystery. They set out to discover which mechanism in both the brain and neuromorphic chips creates a form of “excitatory background noise” that is necessary for certain types of computations in neuronal networks. Physicist Dr. Tom Tetzlaff from Jülich’s Institute of Neuroscience and Medicine (INM-6) played a key role in the investigations.

What makes neuromorphic hardware different from conventional computer chips?

Conventional computers can complete certain tasks very quickly – for instance, performing computations involving huge numbers or storing and retrieving large volumes of data. Everyday tasks, however, often pose other kinds of problems, such as recognizing objects or predicting events in a constantly changing natural environment that is subject to disturbances. Moreover, the computers must learn new things from a small number of training examples.

In this regard, biological brains – and biological, intelligent systems in general – are vastly superior to traditional computers. This is particularly evident in terms of energy efficiency: while some supercomputers require as much energy as a small town, our brain only uses about as much as a light bulb. That level of efficiency is the goal we want to achieve.

Why does the combination of neuromorphic computing and artificial intelligence hold so much interest?

Artificial intelligence is based on algorithms that resemble the way information is processed in the brain. These artificial neuronal networks are already being used in a wide range of practical applications. At the moment, these applications are normally run on traditional computers that can be used for a wide range of operations rather than being optimized for this particular purpose. In contrast, the premise behind neuromorphic computers is that you develop dedicated hardware to support these specific algorithms. As a result, the hardware is more energy-efficient, faster, and more robust, meaning it can also be used for mobile applications, for example.

What kinds of application are viable?

Examples are applications designed to find patterns in huge volumes of data, for purposes such as the early diagnosis of particular diseases, or robotics applications. Another important field of application is neuroscience as a whole. Neuromorphic architectures have the potential to be very fast – much faster than real time.

The problem is that although today’s supercomputers do allow us to simulate large areas of the mammalian brain, they do so very slowly. As a result, we can only observe processes in the brain for a very short time, i.e. a few seconds. However, many processes – such as brain plasticity, adaptation, and development – take place over much longer time scales, lasting minutes, hours, or even days.

What approaches have been developed in neuromorphic hardware?

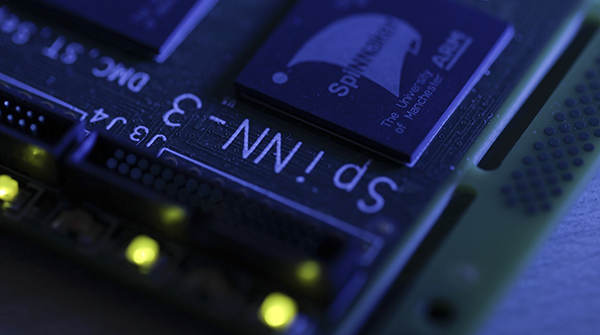

One example is the BrainScaleS system developed by our partners in Heidelberg, which models neurons using analogue electronic circuits and can run up to 10,000 times faster than real time. A contrasting approach is offered by the SpiNNaker system designed by our Manchester partners, which is purely digital. The advantage of this system is that it is very flexible and, in principle, freely programmable, but it is somewhat slower. SpiNNaker is designed for real-time applications in fields such as robotics.

A wide range of other systems also exist, such as IBM’s TrueNorth or Intel’s Loihi. Most of these systems focus on the field of AI, while others are designed explicitly for neuroscience applications.

SpiNNaker module designed by partners from the University of Manchester.

Copyright: Forschungszentrum Jülich

What do you see as the biggest obstacles to practical application?

One of the main problems right now is the question of what we actually want to achieve. The basic idea is to draw inspiration from the laws of nature to create efficient systems. At the moment, however, we do not know precisely which biological principles hold the key to the brain’s efficiency. Although there are lots of ideas, there is no clear consensus on what is necessary for this.

You and your team recently succeeded in shedding light on an interesting aspect. You set out to determine how stochastic computations can be performed using biological neuronal networks. What exactly did this involve?

Our work focused on the concept of probabilistic computing. The aim here is not only to find a single optimal solution, but also to find numerous alternatives. In addition, we need to be able to assess how reliable or risky each solution is. Specific neuronal networks exist for this type of problem, and in order to function, they require what is known as intrinsic stochasticity.

These networks can have different states – to visualize these, imagine a state as a ball in a landscape of mountains and valleys. If you place the ball at a certain start point, it will roll into the nearest valley and stay there. We can arrive at this single solution relatively quickly. But if we want the ball to reach several other valleys, we have to introduce another element – a type of noise, for example – to move the ball between the different mountains and valleys. This gives us a picture of all possible solutions as well as a measure of how good or reliable each one is, based on how often the ball ends up in each valley. For this to really work, the noise has to meet certain quality requirements.
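The ball-and-landscape picture can be sketched in a few lines of Python. This is only an illustration, not the method used in the study: the landscape values, the function names, and the choice of the Metropolis acceptance rule driven by a standard pseudorandom generator are all assumptions made for this example.

```python
import math
import random
from collections import Counter

# Hypothetical 1-D "landscape": the energy at each of 9 discrete positions.
# Valleys (local minima) sit at indices 1, 4 and 7; index 4 is the deepest.
LANDSCAPE = [3.0, 1.0, 3.0, 2.5, 0.0, 2.5, 3.0, 0.5, 3.0]


def greedy_descent(start):
    """Ball without noise: roll downhill until no neighbour is lower."""
    pos = start
    while True:
        neighbours = [p for p in (pos - 1, pos + 1) if 0 <= p < len(LANDSCAPE)]
        best = min(neighbours, key=lambda p: LANDSCAPE[p])
        if LANDSCAPE[best] >= LANDSCAPE[pos]:
            return pos  # stuck in a valley
        pos = best


def noisy_walk(start, steps, temperature, rng):
    """Ball with noise: Metropolis rule. Returns visit counts per position."""
    pos = start
    visits = Counter()
    for _ in range(steps):
        candidate = pos + rng.choice((-1, 1))
        if 0 <= candidate < len(LANDSCAPE):
            delta = LANDSCAPE[candidate] - LANDSCAPE[pos]
            # Downhill moves are always taken; uphill moves only sometimes,
            # which is what lets the ball escape from a valley.
            if delta <= 0 or rng.random() < math.exp(-delta / temperature):
                pos = candidate
        visits[pos] += 1
    return visits
```

Without noise, a ball started at position 0 settles in the valley at index 1 and never reaches the deeper ones. With noise, for example `noisy_walk(0, 200_000, 1.0, random.Random(0))`, the walk should visit every valley and spend the most time in the deepest, so the visit counts serve as a rough measure of how good each solution is.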

What new insights did you gain?

Up to now, performing probabilistic computing on neuromorphic hardware required special additional hardware with pseudorandom number generators to produce this noise. Quite apart from the technical complexity this involved, there is still the question of which mechanism in nature is responsible for this stochasticity. You see, the behaviour of individual biological cells in isolation is deterministic rather than stochastic. This means that if we know their initial state, we can predict their behaviour.

Various biologically plausible mechanisms have been suggested over the years, and we have now proposed another candidate. We showed that, because of their dynamics, neuronal networks found in the cerebral cortex produce an activity that is very well suited for use as a noise source for probabilistic computations. These networks can be implemented on neuromorphic hardware directly, i.e. without any additional hardware, and very efficiently – a single network of a few hundred cells can supply high-quality noise for a large number of very large networks.

What applications is this kind of probabilistic computing relevant for?

One example is medical diagnosis, for instance to provide an early diagnosis of cancer based on imaging data. For patients, this information is of huge significance, and therefore it is extremely important to be able to judge how reliable a particular diagnosis is. At a certainty of 98 %, it’s clear that the situation is serious. At 60 %, on the other hand, it’s a different matter.

Another example from research is the “travelling salesman” problem. If someone needs to go to several places, the objective is to minimize the total distance to be travelled. Solving this type of problem requires numerous constraints to be satisfied, which makes it very difficult. With many applications involving navigation, an alternative solution is needed fast in the event that something unexpected happens. This requires a probabilistic approach.
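As a rough illustration of a stochastic search on this problem, the sketch below uses simulated annealing, a well-known noise-driven heuristic. It is not the approach described in the publication; the city coordinates, function names, and cooling parameters are hypothetical and chosen only for this example.

```python
import math
import random

# Hypothetical city coordinates, chosen only for illustration.
CITIES = [(0, 0), (1, 5), (5, 2), (6, 6), (8, 3), (2, 8), (7, 0), (3, 3)]


def tour_length(tour):
    """Total length of the closed round trip visiting cities in this order."""
    return sum(
        math.dist(CITIES[tour[i]], CITIES[tour[(i + 1) % len(tour)]])
        for i in range(len(tour))
    )


def anneal(steps, rng, t_start=5.0, t_end=0.01):
    """Simulated annealing: random changes, accepted more strictly over time."""
    tour = list(range(len(CITIES)))
    rng.shuffle(tour)
    current = tour_length(tour)
    best_tour, best = tour[:], current
    for step in range(steps):
        # Geometric cooling from t_start down towards t_end.
        temperature = t_start * (t_end / t_start) ** (step / steps)
        # Propose a new tour by reversing a randomly chosen segment.
        i, j = sorted(rng.sample(range(len(tour)), 2))
        candidate = tour[:i] + tour[i:j + 1][::-1] + tour[j + 1:]
        length = tour_length(candidate)
        # Shorter tours are always accepted; longer ones only with a
        # probability that shrinks as the temperature falls.
        if length <= current or rng.random() < math.exp((current - length) / temperature):
            tour, current = candidate, length
            if current < best:
                best_tour, best = tour[:], current
    return best_tour, best
```

Calling `anneal(20_000, random.Random(1))` returns a short round trip together with its length. Running it with different seeds yields alternative routes of similar quality, which is the kind of fallback a navigation application would need when something unexpected happens.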

One final question. Do you believe that neuromorphic chips will be as widely used as traditional computer chips in future?

I believe that at some point in the future we will indeed be using a different kind of hardware, on both computers and smartphones, that is optimized for AI applications and artificial neuronal networks. As I mentioned before, however, one of the main problems we are currently facing is that we do not know exactly what is needed to make these systems highly energy-efficient, robust, and fast. As a result, our current research is focused on systems for neuroscience applications specifically. On top of that, our goal here at Jülich is not to build a standalone neuromorphic hardware system such as Heidelberg’s BrainScaleS system; instead, we intend to develop neuromorphic components that can be combined with conventional computers, such as an accelerator card or an accelerator module.

Glossary:

| Term | Definition |
| --- | --- |
| Neuromorphic chip | A neuromorphic chip is a microchip modelled on the principle of natural neuronal networks in the brain. |
| Artificial neuronal network | Artificial neuronal networks are abstract models of neuronal networks that are inspired by the human brain and can be used for machine learning and artificial intelligence tasks. |
| Deterministic vs. stochastic systems | In principle, the behaviour of deterministic systems can be deduced from a previous state, while that of stochastic systems cannot. The key factor is the degree of “predeterminedness” of the system. In deterministic systems, the transition between one state and the next must take place, but in stochastic systems it is merely probable that it will take place. |
| Probabilistic computing | Probabilistic approaches do not use fixed values and objects such as 0 and 1; instead, they use random objects that occur with a certain probability. This method is beneficial when trying to obtain an overall picture of variable solutions, for uses such as risk assessments or optimizing specific processes or routes. It is also used for interpreting input data that is incomplete or contains disturbances, for instance in the field of image and object recognition. |
| Stochastics, stochasticity | An event or result is described as “stochastic” when it does not always occur, or only occurs occasionally, when the same process is repeated. As a result, the occurrence of this event cannot be predicted on an individual basis, a principle known as stochasticity. |

Original publication:

Deterministic networks for probabilistic computing

Jakob Jordan, Mihai A. Petrovici, Oliver Breitwieser, Johannes Schemmel, Karlheinz Meier, Markus Diesmann, Tom Tetzlaff

Scientific Reports (4 December 2019), DOI: 10.1038/s41598-019-54137-7

Original source:

“Noisy” Chips: Insights from Brain Research Offer Benefits for Neuromorphic Hardware on the Forschungszentrum Jülich website by Tobias Schlößer (republished with permission)

Further information:

- Order from chaos, chaos from order, online article published by the Human Brain Project, 19 December 2019

- Institute of Neuroscience and Medicine, Computational and Systems Neuroscience (INM-6 / IAS-6)

Contact:

Dr. Tom Tetzlaff

Institute of Neuroscience and Medicine, Computational and Systems Neuroscience (INM-6 / IAS-6)

Tel.: +49 2461 61-85166

E-Mail: t.tetzlaff@fz-juelich.de