Computing, Cloud, Storage and Network Services

Contact us

Contact our support team by email at support@ebrains.eu.


Community Space

Join our High-Performance Computing (HPC) and Cloud Computing Community Space in the EBRAINS Community.

Social Media

We were active on Twitter/X under @HBPHighPerfComp during the Human Brain Project (HBP).

Computing, Cloud, Storage and Network Services for EBRAINS

The High-Performance Computing (HPC), Cloud Computing, Storage and Network Services of the Fenix infrastructure are integrated into, and operated as part of, the distributed HBP and EBRAINS infrastructure. Additional services developed on top of them, such as data and monitoring services, make them an integral part of EBRAINS, forming the backbone of its infrastructure.

The services can be used for a variety of use cases, ranging from developing and hosting platform services on virtual machines to modelling, simulation and data analysis, as well as training and education.

Shiting Long (JUELICH) and Javier Bartolomé (BSC) talk about their contributions to the EBRAINS Computing Services.

Services available

Which services are available?

The EBRAINS Computing Services developed, deployed, integrated and operated a variety of basic IT services within the distributed HBP and EBRAINS infrastructure, in particular the federated HPC, Cloud, storage and network infrastructure. These services built on resources and services of the Fenix infrastructure that were made available through the ICEI project. Together with Fenix, the EBRAINS Computing Services provided the computing and storage backbone of the EBRAINS infrastructure.

Fenix infrastructure services

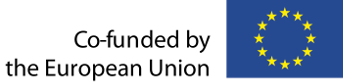

Within the Fenix infrastructure, six European supercomputing centres have agreed to align their services. The distinguishing characteristic of this e-infrastructure is that data repositories and scalable, interactive supercomputing systems are located in close proximity and are well integrated. An initial version of this infrastructure was realised by BSC (Spain), CEA (France), CINECA (Italy), ETHZ-CSCS (Switzerland) and JUELICH-JSC (Germany) in the Interactive Computing E-Infrastructure (ICEI) project, which was part of the HBP and finished in September 2023.

The Fenix infrastructure offered the following services at the end of the ICEI project:

- Interactive Computing Services: Quick access to single compute servers to analyse and visualise data interactively, or to connect to running simulations that use the Scalable Computing Services.

- Scalable Computing Services: Massively parallel HPC systems that are suitable for highly parallel brain simulations or for high-throughput data analysis tasks.

- Virtual Machine Services: Service for deploying virtual machines (VMs) in a stable and controlled environment that is, for example, suitable for deploying platform services like the HBP Collaboratory, image services or neuromorphic computing front-end services.

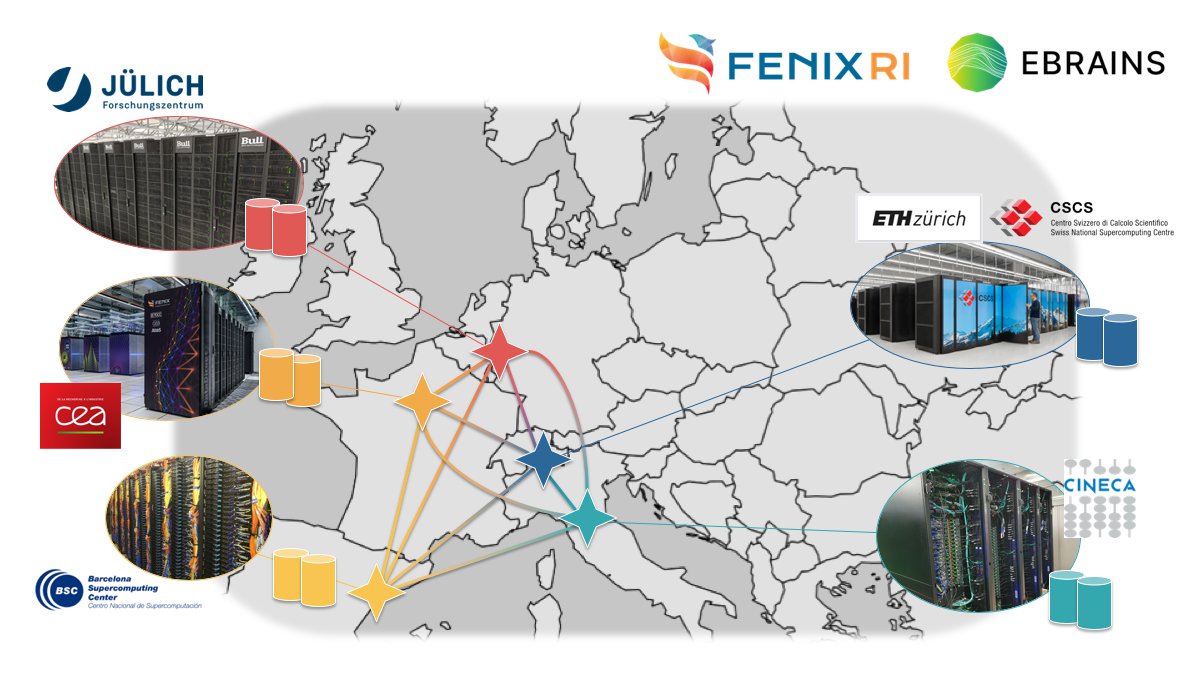

- Active Data Repositories: Site-local data repositories close to computational and/or visualisation resources that are used for storing temporary replicas of data sets.

- Archival Data Repositories: Federated data storage, optimised for capacity, reliability and availability that is used for long-term storage of large data sets which cannot be easily regenerated. These data stores allow the sharing of data with other researchers inside and outside of HBP.

Another important focus was adapting the generic Fenix infrastructure services to the specific needs of EBRAINS, e.g., by integrating the Fenix and EBRAINS Authentication and Authorization Infrastructure (AAI) and the Fenix User and Resource Management Service (FURMS).

Additional infrastructure services

While the ICEI project focused on procuring hardware and developing a few generic (i.e., not community-specific) solutions such as FURMS, the EBRAINS Computing Services operated these services and linked them to the EBRAINS infrastructure by developing, enhancing and operating additional services as required by other parts of the HBP and by the EBRAINS user communities.

In addition, the EBRAINS Computing Services developed, deployed and operated several services for EBRAINS that could also be useful to other communities:

- Central Data Transfer Services: for transferring data between Active or Archival Data Repositories at different Fenix sites

- Central Data Location Service: a service, integrated with the EBRAINS Knowledge Graph, for finding, downloading, uploading and sharing data

- Infrastructure Monitoring Service: a production-level service for monitoring the operation of the distributed e-infrastructure services and for reporting incidents

- Slurm plugin for resource co-allocation: for co-allocating compute and high-performance storage resources on an HPC system with multi-tiered storage

Enabling EBRAINS

The systems and services operated by the EBRAINS Computing Services enabled the platform services layer to integrate different EBRAINS services into complex workflows. Within the HBP and EBRAINS, the services of this infrastructure layer thus served as a basis for the EBRAINS Data Services and EBRAINS Modelling Services, and they could also be used directly by end users. The work focused mainly on operating the infrastructure services with a high quality of service, achieved, e.g., by providing robust operational environments and establishing mechanisms for the timely identification of problems.

Getting access

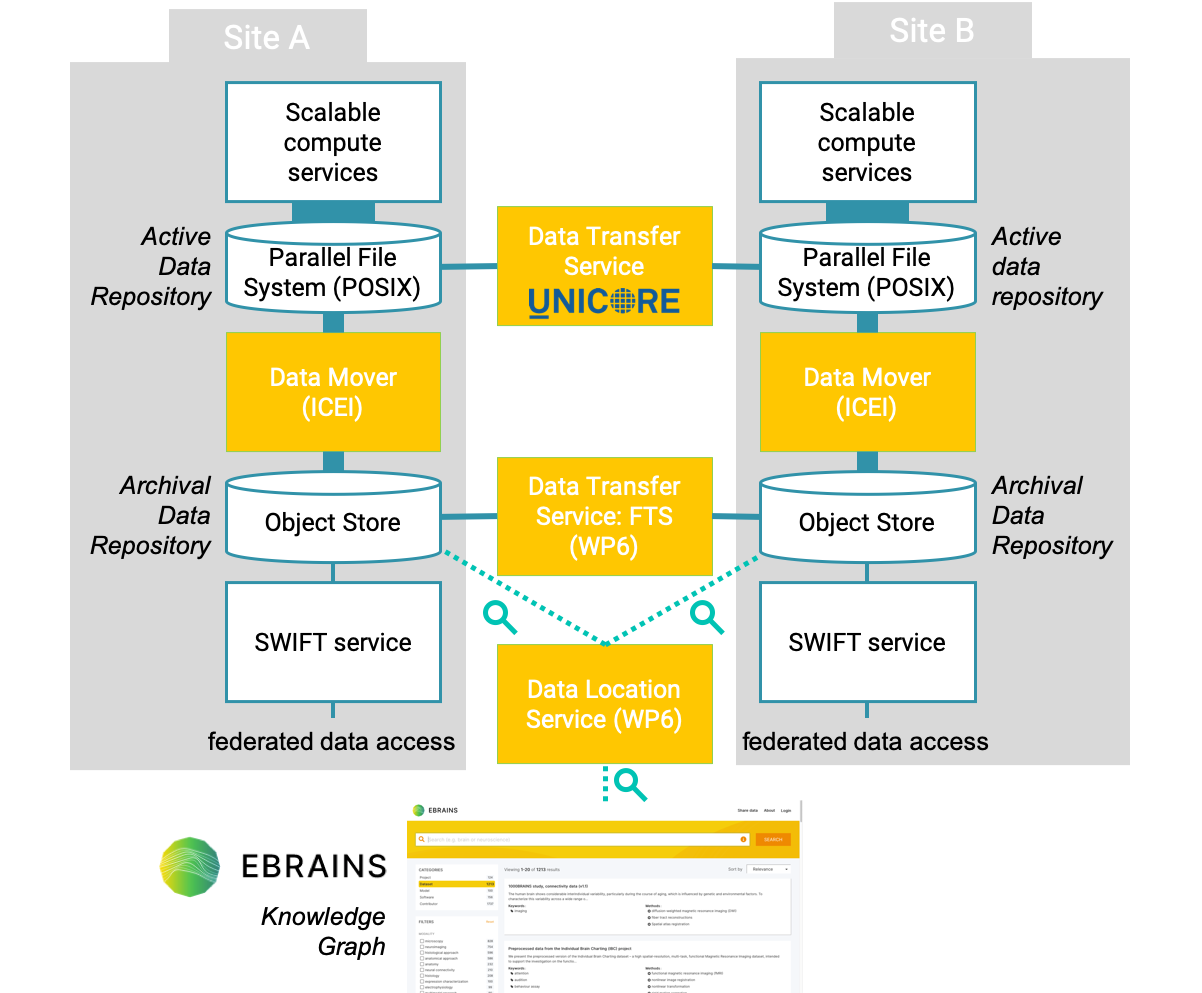

While the ICEI project was active, EBRAINS provided the neuroscientific community in Europe with computing and storage services at leading European supercomputing and data centres that are part of the Fenix infrastructure. These services could be requested and used separately or in combination, according to users’ needs. They included Scalable and Interactive Computing Services, e.g., for large-scale simulations and analyses, Virtual Machine Services for running customised environments, and Archival and Active Data Repositories for storing, sharing and accessing data.

European neuroscientists could apply through a dedicated, continuously open HBP-ICEI Call for Proposals. Users from other European research communities could obtain access through the PRACE-ICEI Calls for Proposals.

Up-to-date information on how to get access to computing and storage services can be found on the EBRAINS and Fenix websites.

For research

How EBRAINS Computing Services supported research

The EBRAINS Computing Services offered key functionality for enabling research workflows in different fields of neuroscience.

Inspiring success stories of scientific projects that leveraged the computing and storage resources for their research can be found on the Fenix website.

Publications

Project publications related to high-performance computing.
