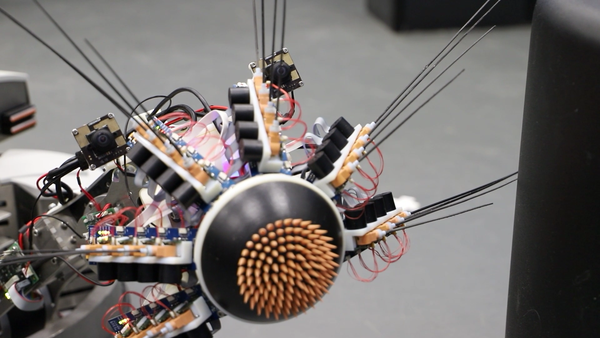

This robot explores the world like a rat - and now thinks a little bit more like one, too

28 June 2022

Whiskeye, the rodent-inspired robot that explores the world using two camera eyes and 24 artificial whiskers arranged in a mechanical nose, now has a cognitive model that is also inspired by organic brains. Two HBP focus areas (Work Package 2, ‘Networks underlying brain cognition and consciousness’ and Work Package 3, ‘Adaptive networks for cognitive architectures: from advanced learning to neurorobotics and neuromorphic applications’) have collaborated to build three new coding models of perception capable of performing prediction and learning, in a way that is similar to how a rat’s brain, or indeed our own, does it.

The robot was already capable of replicating the exploratory behaviour of a rat, thanks to its array of whiskers and cameras, and of navigating autonomously among obstacles. It has a virtual counterpart on the EBRAINS Neurorobotics platform, which can be trained in a similar fashion.

The three newly developed computational models take inspiration from biological brains, which operate in bursts of electricity (spikes) instead of a continuous flux of information. By implementing a brain-like, realistic architecture, the neuron model underlying Whiskeye’s behavior is now able to perform object reconstruction, simulate a spiking cortical column, and expand its perceptual and cognitive capabilities. “Think of what it is like to see an object like a tree”, says Cyriel Pennartz, Professor at the University of Amsterdam and senior researcher in the HBP. “Not only can we feel and see a specific instance of the tree, but we also understand that it remains the same tree under different viewing angles. That’s what the model is capable of now”. The next step, according to the researchers, is to integrate these new models to better understand perception and cognition.

VIDEO - A suite of models for visual perception and invariant object recognition

The potential applications go beyond replicating how a biological system perceives the surrounding world and building neuro-inspired robots. The models may also offer new insights into the neuronal mechanisms of brain conditions like autism or schizophrenia, which frequently involve disorders of perception and misrepresentations such as hallucinations.

LinkedIn Live Interview

The Human Brain Project is presenting a series of talks with invited scientists to discuss their latest achievements in understanding the brain. The talks will be live-streamed to the Human Brain Project LinkedIn page.

On 30 June 2022 Angelica da Silva Lantyer interviewed Cyriel Pennartz, Professor and Head of the Department of Cognitive and Systems Neuroscience at the University of Amsterdam, who discussed how he and his team have connected brain-inspired deep learning to biomimetic robots.