The millisecond conversation between brain and eye

19 December 2017

Three times a second, or roughly 10,000 times every hour, your eyes saccade: they jump in unison, shifting their focus from one spot in the visual field to another. And yet the world never appears to leap about or shake as it would if a camera were doing the same. Why?

Human Brain Project scientist Lars Muckli and his team at Glasgow University have shown that the brain helps achieve this smoothness by predicting what it will see next. In a study published in the Nature journal Scientific Reports, they showed that both feed-forward and feedback mechanisms operate in the visual cortex.

He says our brains send a signal of expectation back down the cortical hierarchy, where it interacts with the input from the eye. In other words, your brain paints a continuous picture of the world based not just on what the eye sees but also on what the brain expects to see, and it is surprised if it sees something different.

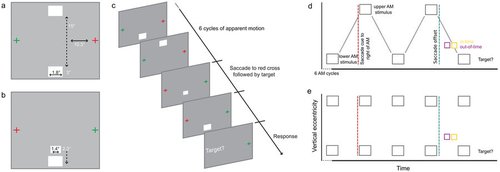

The study used an optical illusion known as apparent motion, together with fMRI, to show that this is happening and how quickly it occurs.

“This particular study has shown something very intriguing and that is that our predictions interact very quickly, three times a second, and whenever we look somewhere we carry over the expectation from the previous fixation.”

The study is a continuation of 10 years of research. Muckli says this previous research had shown what a signature of expectation and prediction error looks like.

“We set this study up such that we build up the expectation, then you make an eye movement and then the first moment after the eye movement a stimulus is presented and we could see that this was already processed as a function of the previous expectation.”

The research is useful to other areas of the Human Brain Project, says Muckli, and feeds into one of the project’s goals: to understand how temporal context modulates vision and how important expectation is in contextualising the feed-forward input.

“So what we are proposing is that a next generation of artificial intelligence and robotics could incorporate feedback cycles that include expectation in order to make vision and robotics more sensitive to expectations.”

He says arm movements in robotics could be much finer if they were controlled by feedback, as well as feed-forward, flows of information.

“In vision, if each image is processed by itself then certain ambiguities occur which wouldn’t when you take the history into consideration. Objects, foreground, backgrounds, objects that disappear, expectations of where things are in the room, which things are big and small, are all much easier to interpret if over time the processing helps to process the next condition. And the way our brains are doing this is that some kind of expectation goes back down the hierarchy and it is interacting with the input. Artificial Intelligence systems usually don’t do this, we are now proposing algorithms that do this and we propose that they will have some advantages.”
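The idea in the quote above, that an expectation built from earlier frames interacts with each new input, can be loosely illustrated with a toy example. This is a minimal sketch and not the algorithm proposed by Muckli's team: it assumes the expectation is a simple running average of past frames, and the function names `prediction_error`, `update_expectation` and `process`, as well as the parameter `alpha`, are hypothetical.

```python
def prediction_error(expectation, frame):
    """Mean absolute difference between what was expected and what arrived."""
    return sum(abs(e - f) for e, f in zip(expectation, frame)) / len(frame)


def update_expectation(expectation, frame, alpha=0.5):
    """Blend the new frame into the running expectation (assumed update rule)."""
    return [(1 - alpha) * e + alpha * f for e, f in zip(expectation, frame)]


def process(frames, alpha=0.5):
    """Return the prediction error for each frame after the first."""
    expectation = list(frames[0])  # start by expecting the first frame to persist
    errors = []
    for frame in frames[1:]:
        # Compare the incoming frame with the expectation carried over from history.
        errors.append(prediction_error(expectation, frame))
        # Then let the new frame update the expectation for the next step.
        expectation = update_expectation(expectation, frame, alpha)
    return errors


# Toy "frames": three identical 4-pixel images, then a sudden change.
frames = [[0, 1, 0, 0]] * 3 + [[0, 0, 0, 1]]
errors = process(frames)
print(errors)
```

In this toy run the error stays at zero while the scene is stable and rises only when the final frame departs from what the history predicts, which is the kind of "surprise" signal the article describes.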

Prof. Lars Muckli is a work package leader in Subproject 3 of the Human Brain Project. His work aims to develop a sophisticated understanding of extensive neural interactions and to develop models incorporating information processing in a realistic theory of how we recognise objects within certain contexts.

Article written by Greg Meylan. Email: gregory.meylan@epfl.ch

Some of the science

Apparent motion is a motion illusion that can be seen between two alternately blinking dots. The internal model perceived as a visual motion illusion alters the chance of detecting a target stimulus on the apparent motion trace. (a) Apparent motion stimulation before saccade: subjects fixated on the red cross. Apparent motion inducing stimuli flashed in alternation to the left of fixation. (b) Stimulation after saccade: subjects fixated on the red cross, with the motion trace now presented to the right of fixation and processed by the left hemisphere. Immediately after saccade landing on the red cross, we presented a target on the apparent motion trace either in-time or out-of-time with the illusion. After the illusion ceased, we prompted subjects to report whether or not they had detected a target. Visual angles are shown for the main experiment (rounded). (c) Diagrammatic apparent motion trial. (d) Apparent motion stimulus timeline. (e) Flicker trial timeline. The flicker trials are the same as the apparent motion trials, but with upper and lower inducers presented simultaneously.

Read the full article in Scientific Reports